Everyone wants to know the big secret for getting better results from their email campaigns — more sales, more bookings, and more engagement.

To improve your results, you can follow best practices, incorporate strategies, and even follow trends, but if you don’t have a good understanding of what your audience responds to, you could be wasting your time.

Why? Your business and subscribers are unique. You need to send emails that catch their attention, and what may work for your peers and similar businesses may not work for you.

You need a way to know what works best for your audience so you can send emails that connect and engage them.

That’s where A/B testing comes into play. Once you know what A/B testing in email marketing is, you’ll have a better idea of how it works and how you can use it in your strategy to test different variables to find what works best for your audience. This will help you optimize your emails over time.

What is A/B testing?

A/B testing is an experiment where two or more versions of something are shown to users randomly, and the results are analyzed to determine what worked and what didn’t.

It is a tool that’s often used in marketing to test landing pages, websites, layouts, emails, and more.

What is A/B testing in email marketing?

A/B testing in email marketing is where you test different variables in your design, header information, or strategy to determine what your subscribers respond to best.

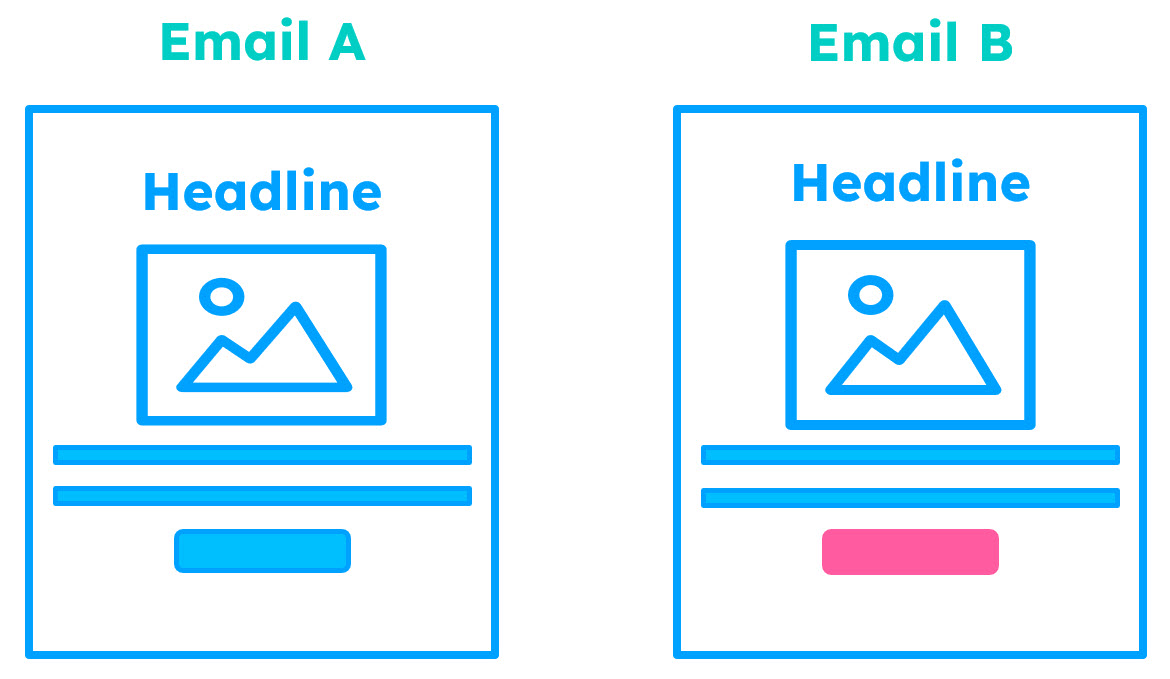

Essentially, you’ll split your list in two and send each half a slightly different email. You’ll send Email A, your control, to List A and Email B, with one variable changed, to List B.

Once the results have had time to trickle in, it’s time to analyze the outcome to determine what worked or didn’t work.

Why you should be using A/B testing in your email marketing

A/B testing allows you to make impactful changes to your emails by finding elements and strategies that catch the attention of your audience and ultimately impact business results.

When used over time, testing allows you to gain insights about your subscribers and what they respond to so you can send more effective emails that your subscribers want to read.

You’ll use data about your emails to pinpoint the variables that have a positive or negative effect and to create benchmarks. This allows you to create better emails for your audience over time, ultimately optimizing them for more opens, clicks, engagement, and conversions.

No matter the business or nonprofit organization, everyone should use A/B testing to learn more about their audience.

How to A/B test your email campaigns

Once you have a good understanding of how A/B testing works and the steps to take, it’s fairly simple to set up an A/B test.

Having a plan goes a long way toward ensuring your test is successful. So spend a few minutes up front determining the details so your test runs smoothly and you can analyze the results fully.

First, decide the objective or goal of your test. This is where you decide what it is you want to learn or what metric to improve.

Consider improving metrics such as click-through rates, conversions, revenue, event registrations, bookings, and referrals, or reducing bounces.

Your goal will help you develop a hypothesis or assumption on what you think is going to happen, such as: “animated GIFs in my email will catch their attention and generate more clicks and conversions for our promotion.”

Another important element for your test is to pick one variable that you’re going to test. The variable is one element you’ll change in your email to try to impact the metric you decided on. This could be something you add to, or change, in your email.

Choosing only one variable will help you determine the impact of the change you made to your email. If you make more than one change, you won’t know which variable or element affected the results.

The last element you’ll need to consider is who you’re sending to and how you’ll be splitting your list.

If you’re just getting started with A/B testing, splitting your list 50/50 is a good place to start. This means that half will get Email A (your traditional email, also called a control because no changes or adjustments are made to the original format), and the other half will get Email B, the one with the variable changed.

You could also think about splitting your list another way, such as 25/25/50, where only half of your list is part of the testing. This means twenty-five percent will receive Email A, and another twenty-five percent will receive Email B. Once the results have had enough time to come in, you’ll determine a winner and send the winning email to the remaining fifty percent of the list.
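Most email service providers can handle the split for you. If you manage your list yourself, the 25/25/50 split described above could be sketched in Python roughly like this. The function name `split_list`, the sample addresses, and the fixed seed are assumptions made for illustration only.

```python
import random


def split_list(subscribers, test_fraction=0.5, seed=42):
    """Randomly split subscribers into group A, group B, and a holdout.

    test_fraction is the share of the list used for testing; it is divided
    evenly between A and B. The rest waits for the winning email.
    """
    shuffled = list(subscribers)           # copy so the original list is untouched
    random.Random(seed).shuffle(shuffled)  # random assignment avoids biased groups

    group_size = int(len(shuffled) * test_fraction) // 2
    group_a = shuffled[:group_size]
    group_b = shuffled[group_size:2 * group_size]
    holdout = shuffled[2 * group_size:]
    return group_a, group_b, holdout


# Hypothetical list of 1,000 addresses
subscribers = [f"subscriber{i}@example.com" for i in range(1000)]
group_a, group_b, holdout = split_list(subscribers)
print(len(group_a), len(group_b), len(holdout))  # 250 250 500
```

Setting `test_fraction=1.0` gives the plain 50/50 split with no holdout. Shuffling before slicing matters: if you simply cut an alphabetical or sign-up-date list in half, the two groups may differ in ways that have nothing to do with your variable.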

What to A/B test (the variables)

The variable is the one element you’ll want to change or test in Email B.

There are a variety of things you can test for your email marketing strategy, and eventually, you may want to test different variables to see their impact. Just remember to test them one at a time.

Here are a few variables you should test:

Header information

If you’re running your first A/B test, experimenting with an element of your header information can be a good place to start. You could try different types of subject lines, preheader text, or even the From Name or email address.

Your subject line is one of the best places to start, as it impacts readers’ impressions of your email right from the inbox. It’s also one of the easiest elements to test.

You could test different keywords or power words to catch their attention.

You could even try out a different subject line or preheader text style to see if one works better than the others.

For example, you could test:

- Asking a question

- Using emojis

- Using alliteration, where each word starts with the same letter or sound (Example: Six seasonal saving secrets)

- Writing in chunks or incomplete sentences (Example: New leggings. Soft & comfy.)

- Using allusion where you refer to pop culture or famous lines (Example: We know what you did yesterday…)

- Telling a joke and making them laugh (Example: Which dog breed is Dracula’s favorite?)

Another element to consider testing is your email’s From Name, as it is one of the first things people will look at. When they recognize the sender, they’re more likely to open and take the action you want them to take.

Most importantly, the From Name needs to be recognizable, so you could test using your business name versus an important person that people recognize within your company to make your emails feel more personal. Maybe that’s the name of your CEO, founder, or even the person who greets everyone at the front desk and on the phone.

Consider testing a combination of a person’s name with the company in the From area to see if one has more of an impact on your readers.

You could also do a similar test with the From email address of your email. You could use a generic From email address such as frontdesk@mybusiness.com versus susan.b@mybusiness.com.

Test the design and layout of your email

If you’ve been sending emails for a while, you can test a refresh of your email’s design or template.

Something new and fresh may just catch their attention and encourage them to continue reading and take action.

Email design A/B test example:

The team at Litmus wanted to see whether a newer template with more design elements would convert better than a simple text email in an older template.

They hypothesized that a design switch would help subscribers better understand the purpose of the email and the action they needed to take, resulting in more conversions or people wanting to continue receiving their emails.

However, they found that for their particular audience, the original design fared better than the proposed update.

That’s why A/B testing any proposed change is so important. You want to be certain the change is going to have a positive impact on your audience before you alienate them with a change they don’t like.

Experiment with email layout and content flow

Another idea is to experiment with different layouts and content flows for different types of emails, which can change the order in which people read your email and see the content.

I’m a big fan of the inverted pyramid layout for most emails where I can have one call to action. However, if I’m featuring multiple topics, products, or services, I like the idea of the Z-pattern or the F-pattern. But it’s a good idea to do some testing to see if one works better for you.

Another option, depending on the goal of your email and test, is to experiment with standard body copy in a branded template versus a text-only email.

Test the content of your email

There are also various elements within the body of the email to test.

You could write two different versions of your email with slightly different body copy, tone, or even length to see which produces better results.

In addition, imagery is essential in just about any email. But what type of imagery do your subscribers respond best to? Test it!

You could test standard product images in your email versus lifestyle imagery of someone happily using your product or service. You could also experiment with a static image versus an animated GIF to see whether the movement can better capture the attention of your readers.

Example of an A/B test on static imagery versus an animated GIF:

Because animation is often thought to grab the reader’s eye and generate engagement, the team at Litmus wanted to understand whether the movement of a GIF would increase their click-through rate.

They hypothesized that the group receiving animation would be more likely to click in the hero area, specifically the hero image with animation.

In the end, the team found that in regard to their audience, the animation only marginally improved conversions.

If the focus of your test is to improve your email’s conversions or revenue, another variable to consider is adjusting your email’s call to action. Try changing the color or design of the button or even changing the text that people click on. For example: “get tickets” versus something more punchy like “save my seat.”

Test the best day and time to send

In order to get people to open an email, it’s ideal to send on a day and time when they’re likely to pay attention and have time to read it. Therefore, the send time can have a big impact on whether they open and engage with your email.

But when is the best time to send it?

It depends on your audience.

For instance, if your audience includes teachers, sending during the school day may not be a good option as they are busy teaching in the classroom. You could try sending your email after school hours, early in the morning before classes start, or even on the weekend to see the impact.

Start with an idea of who your audience is and what their day is like so you can pick new days and times to test in your strategy.

Learn from your tests and improve your results

After you’ve sent your emails, you’ll want to give the results some time to come in.

Most people will open your email within 24 hours, but you might give it another day or two for any stragglers.

When reviewing the results, refer back to your initial goal to know which metrics you should be paying special attention to.

You’ll find a wealth of information within your email marketing reports from your service provider, such as sends, opens, clicks, bounces, and unsubscribes.

While it’s important to pay attention to the metrics that impact your initial goal, it’s essential to look at all the metrics your test may have impacted — good and bad. For instance, you’ll want to determine if a test could have caused more emails to bounce or more people to unsubscribe.

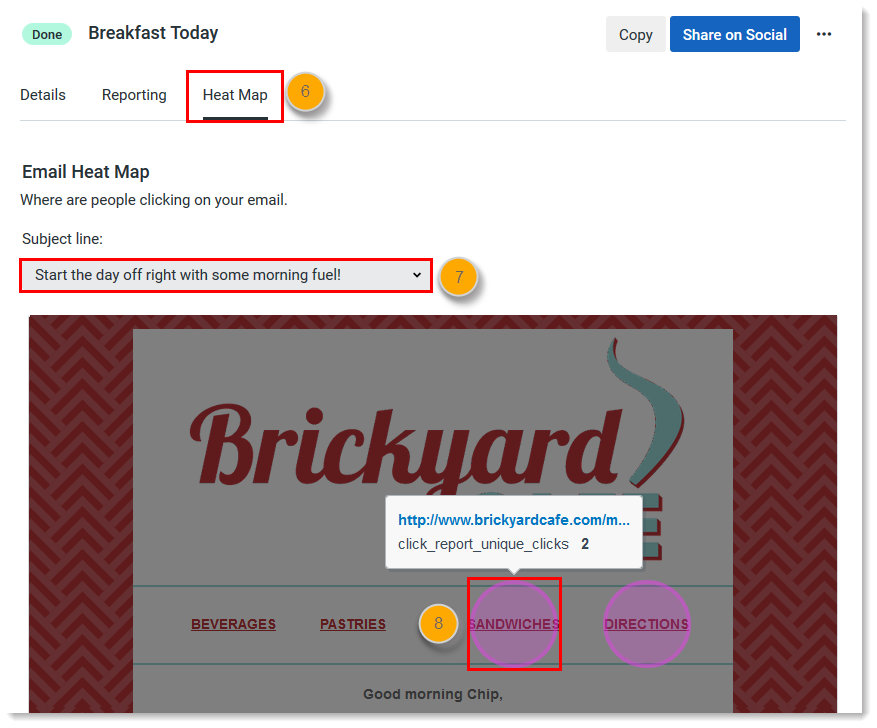

In addition, you’ll want to review what people clicked on in your email. Did they click the main call to action, or did they click any other secondary links?

Remember, opens and clicks are only a starting point to understanding your test results. It’s important to look beyond just the standard metrics; you’ll want to look at the impact on conversions which means you may need to go into your ecommerce tool or look at your website analytics to determine conversions and revenue generated from each email in your test.
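To compare versions fairly, it helps to turn raw counts into rates. Here is a minimal sketch of that arithmetic in Python. The function name `summarize` and every number below are made up for illustration; your real figures would come from your email reports and your ecommerce or analytics tool.

```python
def summarize(sent, opens, clicks, conversions, revenue):
    """Turn raw counts for one email version into comparable rates."""
    return {
        "open_rate": opens / sent,
        "click_rate": clicks / sent,
        "conversion_rate": conversions / sent,
        "revenue_per_email": revenue / sent,
    }


# Hypothetical results for each version
email_a = summarize(sent=500, opens=150, clicks=40, conversions=10, revenue=400.0)
email_b = summarize(sent=500, opens=175, clicks=35, conversions=14, revenue=560.0)

for metric in email_a:
    print(f"{metric}: A={email_a[metric]:.3f}  B={email_b[metric]:.3f}")
```

In this invented example, Email B gets fewer clicks but more conversions and revenue, which is exactly the kind of result you would miss by stopping at opens and clicks.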

Even if you’re only A/B testing subject lines, you’ll still want to look beyond opens. The subject line affects who opens the email, and no one can take the action you want them to take without first opening it.

Once you’ve looked at your metrics, think about any outside factors that could have skewed the results. Was there a holiday where more or fewer people could have engaged, or was there some sort of current event that may have impacted the results?

If you think your results may be skewed for any reason, continue testing.

Once you’ve reviewed your metrics, determine which email performed better and make a decision on whether further testing is needed.

If lists A and B each have fewer than 1,000 subscribers, you’re unlikely to reach statistical significance, so any difference in your results may be due to chance alone. It’s still a good idea to run tests, but in this case, you’ll want to run the test a few more times to be sure of the results.
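If you’d like a rough check on whether a difference could be down to chance, one common approach is a two-proportion z-test. The sketch below is one way to do it with Python’s standard library; it is not something your email provider necessarily uses, and the function name and the numbers are assumptions for illustration.

```python
import math


def two_proportion_z_test(clicks_a, sends_a, clicks_b, sends_b):
    """Return (z, p_value) for the difference between two click rates."""
    rate_a = clicks_a / sends_a
    rate_b = clicks_b / sends_b
    # Pooled rate: the click rate if both versions performed the same
    pooled = (clicks_a + clicks_b) / (sends_a + sends_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    if std_err == 0:
        return 0.0, 1.0  # no clicks at all (or all clicks): nothing to compare
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: 500 sends each, 50 clicks for A and 65 clicks for B
z, p_value = two_proportion_z_test(50, 500, 65, 500)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

With these made-up numbers, the p-value comes out above the conventional 0.05 threshold, so a jump from a 10% to a 13% click rate on 500 sends per group could still be chance. That is why repeating the test on small lists is worthwhile.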

I suggest creating a spreadsheet to document all of your email tests. Record the variables used, and the results, so you can make quick decisions on future email campaigns as well as further testing.

This also allows you to set benchmarks for future tests and your overall strategy.
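A spreadsheet program works fine for this. If you prefer to keep the log as a CSV file, a minimal sketch could look like the following. The column names, the `log_test` helper, and the sample row are all hypothetical; adjust them to whatever you actually track.

```python
import csv
import io

# Hypothetical columns for a test log
FIELDS = ["date", "variable", "version_a", "version_b",
          "metric", "result_a", "result_b", "winner"]


def log_test(file_obj, row, write_header=False):
    """Append one A/B test record to an open CSV file object."""
    writer = csv.DictWriter(file_obj, fieldnames=FIELDS)
    if write_header:
        writer.writeheader()
    writer.writerow(row)


# In-memory buffer for the demo; for a real file you could use
# open("ab_tests.csv", "a", newline="") instead.
buffer = io.StringIO()
log_test(buffer, {
    "date": "2024-01-15",            # made-up example data
    "variable": "subject line",
    "version_a": "statement",
    "version_b": "question",
    "metric": "click rate",
    "result_a": 0.10,
    "result_b": 0.13,
    "winner": "B",
}, write_header=True)
print(buffer.getvalue())
```

Keeping one row per test, with the variable and both results side by side, makes it easy to sort by variable later and see which changes keep winning.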

Build on what you learn from A/B testing

Once you’ve analyzed the results, utilize that information to better your email marketing strategy.

You may decide that there are certain strategies or elements to stay away from and some to use more.

It’s also important to continue testing other variables over time.

If the last few years have taught us anything, it’s that consumer preferences and trends are changing all the time. Continue testing the same ideas every once in a while to determine if any strategy changes should be made.

If your strategy includes different types of emails, such as newsletters, promotional emails, and informational or educational emails, it’s a good idea to perform separate tests for those as well.

People may react differently to different types of emails.

Run your next A/B test

By now, you know what A/B testing in email marketing is, and you know that it is essential if you want to take your email strategy to the next level and send emails that your subscribers engage with.

Start by understanding your goals, and then you can choose one variable to test at a time.

If you’re new to testing, start with different subject lines.

Then you can use your data to set benchmarks and document your A/B testing over time. Know what you’ve tested and how it went so you can decide on your next test to improve your strategy further.